Programmable Autonomous Workers

The operating system for autonomous AI agents. Multi-channel, multi-agent, safety-first. Every action follows a strict six-stage pipeline — the LLM reasons, the system executes.

Six-Stage Safety Pipeline

Every action — without exception — passes through six validated stages. The LLM generates plans. The system executes them. They never cross.

1. Sanitize input, apply rate limits, and detect prompt injection across 15+ patterns.

2. The LLM generates a strict JSON plan: reasoning only, with no execution and no side effects.

3. Check the plan against the schema and safety policy, simulate blockchain transactions, and score risk.

4. Execute through tools, APIs, the browser, or the blockchain, sandboxed and scoped. The LLM has no raw access to any of them.

5. Verify results and self-heal failures: diagnose → fix → retry → escalate.

6. Record a full audit trail in the Trace Explorer as structured JSONL, with secrets redacted automatically.
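To make the ordering concrete, here is a minimal TypeScript sketch of a staged pipeline. It assumes hypothetical stage names (intake, plan, validate, execute, verify, log), a stubbed `fakeLlm` in place of a real model, and a one-tool registry. None of these are PAW's actual internals. What it illustrates is the split: the model only returns JSON text, the system decides whether any tool runs, and the log stage runs even when an earlier stage rejects the action.

```typescript
// Illustrative only: stage names, types, and the tool registry are assumptions.

type Plan = { tool: string; args: Record<string, unknown> };

interface Ctx {
  input: string;
  plan?: Plan;
  result?: unknown;
  trace: string[]; // names of stages that completed
}

// A stage mutates the context or throws to reject the action.
type Stage = (ctx: Ctx) => void;

// Three made-up injection patterns standing in for a larger set.
const INJECTION_PATTERNS = [/ignore (all )?previous instructions/i, /you are now/i, /reveal .*system prompt/i];

// The only tools the system will run; the model cannot add to this map.
const TOOLS = new Map<string, (args: Record<string, unknown>) => unknown>([
  ["echo", (args) => String(args.text)],
]);

// Stand-in for the model: it returns JSON text and has no access to TOOLS.
const fakeLlm = (input: string): string => JSON.stringify({ tool: "echo", args: { text: input } });

const STAGES: [string, Stage][] = [
  ["intake", (ctx) => {
    ctx.input = ctx.input.trim();
    if (INJECTION_PATTERNS.some((re) => re.test(ctx.input))) throw new Error("possible prompt injection");
  }],
  ["plan", (ctx) => { ctx.plan = JSON.parse(fakeLlm(ctx.input)) as Plan; }],
  ["validate", (ctx) => {
    if (!ctx.plan || !TOOLS.has(ctx.plan.tool)) throw new Error("plan uses an unknown tool");
  }],
  ["execute", (ctx) => { ctx.result = TOOLS.get(ctx.plan!.tool)!(ctx.plan!.args); }],
  ["verify", (ctx) => { if (ctx.result === undefined) throw new Error("tool returned nothing"); }],
];

function runPipeline(input: string): Ctx & { status: string } {
  const ctx: Ctx = { input, trace: [] };
  let status = "ok";
  try {
    for (const [name, stage] of STAGES) {
      stage(ctx);
      ctx.trace.push(name);
    }
  } catch (err) {
    status = `rejected: ${(err as Error).message}`;
  }
  // Stage 6 (log) always runs, including after a rejection.
  ctx.trace.push("log");
  return { ...ctx, status };
}
```

A rejected input never reaches `execute`, but it still leaves a `log` entry behind.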

Everything You Need, Nothing You Don't

Production-grade components that work together. Extend with skills, MCP servers, or custom tools.

Three Agent Modes

Supervised, Autonomous, and Free modes, toggled per user. Activating Free mode requires passing two safety warning layers. The validation pipeline runs in every mode.
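Here is a sketch of how the three modes might gate execution. It assumes a four-level risk scale (`low` to `critical`) and a simple count of acknowledged warnings. Both are illustrative assumptions, and the function names are hypothetical.

```typescript
// Mode names come from the text; the risk scale and acknowledgement count are assumptions.

type Mode = "supervised" | "autonomous" | "free";
type Risk = "low" | "medium" | "high" | "critical";

// Validation always runs before this check; modes only change the confirmation gate.
function needsConfirmation(mode: Mode, risk: Risk): boolean {
  if (mode === "supervised") return true; // confirm every action
  if (mode === "autonomous") return risk !== "low" && risk !== "medium"; // auto-run low/medium only
  return false; // free: no confirmation gates
}

// Switching to free mode requires both safety warnings to have been acknowledged.
function switchMode(requested: Mode, acknowledgedWarnings: number): Mode {
  if (requested === "free" && acknowledgedWarnings < 2) {
    throw new Error("free mode requires acknowledging both safety warnings");
  }
  return requested;
}
```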

Browser Automation

Puppeteer-based control. Navigate, click, type, extract, screenshot. Full headless browser in the agent pipeline.

Multi-Agent Orchestration

Agent registry with capability routing. Delegate tasks, coordinate work, enforce depth limits across sub-agents.
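Here is one way capability routing with a depth limit could look, as a hypothetical sketch. `AgentRegistry`, `AgentSpec`, and the default limit of 3 are assumptions. Every delegation goes back through the registry one level deeper, so a runaway chain of sub-agents fails fast instead of recursing indefinitely.

```typescript
// Hypothetical names: AgentSpec, Task, and AgentRegistry are not PAW's API.

interface Task {
  capability: string;
  payload: string;
}

interface AgentSpec {
  name: string;
  capabilities: Set<string>;
  // An agent may hand sub-tasks back to the registry through `delegate`.
  handle: (task: Task, delegate: (sub: Task) => string) => string;
}

class AgentRegistry {
  private readonly agents: AgentSpec[] = [];
  private readonly maxDepth: number;

  constructor(maxDepth = 3) {
    this.maxDepth = maxDepth;
  }

  register(agent: AgentSpec): void {
    this.agents.push(agent);
  }

  // Route to the first registered agent that advertises the capability.
  route(task: Task, depth = 0): string {
    if (depth > this.maxDepth) {
      throw new Error(`delegation depth ${depth} exceeds limit ${this.maxDepth}`);
    }
    const agent = this.agents.find((a) => a.capabilities.has(task.capability));
    if (!agent) throw new Error(`no agent offers "${task.capability}"`);
    // Each nested delegation re-enters route() one level deeper.
    return agent.handle(task, (sub) => this.route(sub, depth + 1));
  }
}
```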

Vector Memory

Persistent semantic memory using TF-IDF vectors with cosine-similarity search, scoped per session, per user, or globally.
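The TF-IDF and cosine-similarity approach fits in a few lines of TypeScript. This is an illustration, not PAW's implementation: the `TfIdfMemory` class, the tokenizer, and the smoothed IDF formula log((1 + N) / (1 + df)) + 1 are all assumptions, and persistence to disk is left out. Each scope is searched on its own, so one user's memories never rank in another user's results.

```typescript
// Illustrative sketch: class name, tokenizer, and IDF smoothing are assumptions.

type Scope = { kind: "session" | "user"; id: string } | { kind: "global" };
type Vec = Map<string, number>;

const scopeKey = (s: Scope): string => (s.kind === "global" ? "global" : `${s.kind}:${s.id}`);

// Lowercase alphanumeric tokens; no stemming, so "wallet" and "wallets" differ.
const tokenize = (text: string): string[] => text.toLowerCase().match(/[a-z0-9]+/g) ?? [];

function cosine(a: Vec, b: Vec): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (const [term, x] of a) {
    normA += x * x;
    dot += x * (b.get(term) ?? 0);
  }
  for (const x of b.values()) normB += x * x;
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

class TfIdfMemory {
  private readonly docs: { scope: string; text: string; tokens: string[] }[] = [];

  add(scope: Scope, text: string): void {
    this.docs.push({ scope: scopeKey(scope), text, tokens: tokenize(text) });
  }

  search(scope: Scope, query: string, k = 3): { text: string; score: number }[] {
    // Only documents in the requested scope are searched, and IDF is computed over them.
    const pool = this.docs.filter((d) => d.scope === scopeKey(scope));
    const df = new Map<string, number>();
    for (const d of pool) for (const t of new Set(d.tokens)) df.set(t, (df.get(t) ?? 0) + 1);
    // Terms never seen in the pool get weight 0.
    const idf = (t: string): number => (df.has(t) ? Math.log((1 + pool.length) / (1 + df.get(t)!)) + 1 : 0);
    const vectorize = (tokens: string[]): Vec => {
      const tf: Vec = new Map();
      for (const t of tokens) tf.set(t, (tf.get(t) ?? 0) + 1 / tokens.length); // term frequency
      return new Map([...tf].map(([t, f]): [string, number] => [t, f * idf(t)])); // TF x IDF
    };
    const q = vectorize(tokenize(query));
    return pool
      .map((d) => ({ text: d.text, score: cosine(q, vectorize(d.tokens)) }))
      .sort((a, b) => b.score - a.score)
      .slice(0, k);
  }
}
```

TF-IDF matches exact tokens, so a query for "wallet" will not find "wallets" unless the tokenizer adds stemming. That lexical limit is the usual trade-off against learned embeddings.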

Blockchain Ready

Optional Solana integration: transfers, balance checks, SPL tokens, transaction simulation. Extensible to any chain.

MCP Protocol

Connect to any Model Context Protocol server. Discover and invoke external tools without writing code.

DAG Workflows

Visual workflow engine. Triggers, conditions, parallel branches, error handling. No external orchestrator needed.
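Parallel branches in a DAG can be derived with Kahn's topological sort. Every step whose dependencies have finished joins the next "wave", and steps in the same wave can run concurrently. Here is a minimal sketch, assuming a hypothetical `Step` shape with `id` and `dependsOn`. Triggers, conditions, and error handling are left out.

```typescript
// Hypothetical step shape; not PAW's workflow format.
interface Step {
  id: string;
  dependsOn: string[];
}

// Kahn's algorithm, grouped into waves of steps that can run in parallel.
function executionWaves(steps: Step[]): string[][] {
  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();
  for (const s of steps) {
    indegree.set(s.id, s.dependsOn.length);
    dependents.set(s.id, []);
  }
  for (const s of steps) {
    for (const dep of s.dependsOn) {
      if (!dependents.has(dep)) throw new Error(`step "${s.id}" depends on unknown step "${dep}"`);
      dependents.get(dep)!.push(s.id);
    }
  }

  const waves: string[][] = [];
  let ready = steps.filter((s) => s.dependsOn.length === 0).map((s) => s.id);
  let done = 0;
  while (ready.length > 0) {
    waves.push(ready);
    done += ready.length;
    const next: string[] = [];
    for (const id of ready) {
      for (const child of dependents.get(id)!) {
        const remaining = indegree.get(child)! - 1;
        indegree.set(child, remaining);
        if (remaining === 0) next.push(child); // all inputs finished
      }
    }
    ready = next;
  }
  // Steps that never became ready are part of a cycle.
  if (done !== steps.length) throw new Error("workflow contains a cycle");
  return waves;
}
```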

Key Management

AES-256-GCM encryption at rest. Ed25519 signing. Keys zeroed after use. Never logged, never leaked.
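The at-rest encryption and key-zeroing pattern can be sketched with Node's built-in `node:crypto` module. It provides AES-256-GCM through `createCipheriv`/`createDecipheriv` and Ed25519 through `generateKeyPairSync`, `sign`, and `verify`. The helper names `seal`, `unseal`, and `withKey` are hypothetical. Note that `key.fill(0)` clears only the caller's buffer: copies held internally by OpenSSL or left behind by the garbage collector are out of its reach.

```typescript
import { createCipheriv, createDecipheriv, generateKeyPairSync, randomBytes, sign, verify } from "node:crypto";

interface Sealed {
  iv: Buffer;
  tag: Buffer;
  data: Buffer;
}

// Encrypt with AES-256-GCM; the key must be 32 bytes.
function seal(plaintext: Buffer, key: Buffer): Sealed {
  const iv = randomBytes(12); // 96-bit nonce, fresh for every encryption
  const cipher = createCipheriv("aes-256-gcm", key, iv);
  const data = Buffer.concat([cipher.update(plaintext), cipher.final()]);
  return { iv, tag: cipher.getAuthTag(), data };
}

// Decrypt; final() throws if the ciphertext or tag was tampered with.
function unseal(sealed: Sealed, key: Buffer): Buffer {
  const decipher = createDecipheriv("aes-256-gcm", key, sealed.iv);
  decipher.setAuthTag(sealed.tag);
  return Buffer.concat([decipher.update(sealed.data), decipher.final()]);
}

// Lend the key to fn, then overwrite the caller's buffer even if fn throws.
function withKey<T>(key: Buffer, fn: (k: Buffer) => T): T {
  try {
    return fn(key);
  } finally {
    key.fill(0);
  }
}

// Ed25519: sign with the private key, verify with the public key.
const { publicKey, privateKey } = generateKeyPairSync("ed25519");
const message = Buffer.from("transfer 1 SOL");
const signature = sign(null, message, privateKey);
const valid = verify(null, message, publicKey, signature); // true
```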

Self-Healing

Intelligent failure recovery: diagnose the root cause, apply a fix strategy, retry, and escalate only when recovery fails.
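Here is a hypothetical sketch of the diagnose → fix → retry → escalate loop. The failure categories, the regex-based `diagnose`, and the use of backoff as the "fix" are assumptions chosen for illustration. A production system would classify errors from structured error codes rather than message text.

```typescript
// Illustrative failure categories; not PAW's taxonomy.
type Diagnosis = "transient" | "rate_limited" | "bad_input" | "unknown";

function diagnose(err: Error): Diagnosis {
  if (/timeout|ECONNRESET/i.test(err.message)) return "transient";
  if (/\b429\b|rate limit/i.test(err.message)) return "rate_limited";
  if (/invalid|schema/i.test(err.message)) return "bad_input";
  return "unknown";
}

// Only these categories are worth retrying; everything else escalates at once.
const RECOVERABLE = new Set<Diagnosis>(["transient", "rate_limited"]);

async function selfHeal<T>(
  action: () => Promise<T>,
  escalate: (err: Error, diagnosis: Diagnosis) => void,
  maxAttempts = 3,
  baseDelayMs = 200,
): Promise<T | undefined> {
  for (let attempt = 1; ; attempt++) {
    try {
      return await action();
    } catch (e) {
      const err = e instanceof Error ? e : new Error(String(e));
      const diagnosis = diagnose(err);
      if (!RECOVERABLE.has(diagnosis) || attempt >= maxAttempts) {
        escalate(err, diagnosis); // hand off to a human or supervisor agent
        return undefined;
      }
      // Fix strategy: exponential backoff, stretched further when rate limited.
      const delayMs = baseDelayMs * 2 ** (attempt - 1) * (diagnosis === "rate_limited" ? 4 : 1);
      await new Promise((resolve) => setTimeout(resolve, delayMs));
    }
  }
}
```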

Works Everywhere You Do

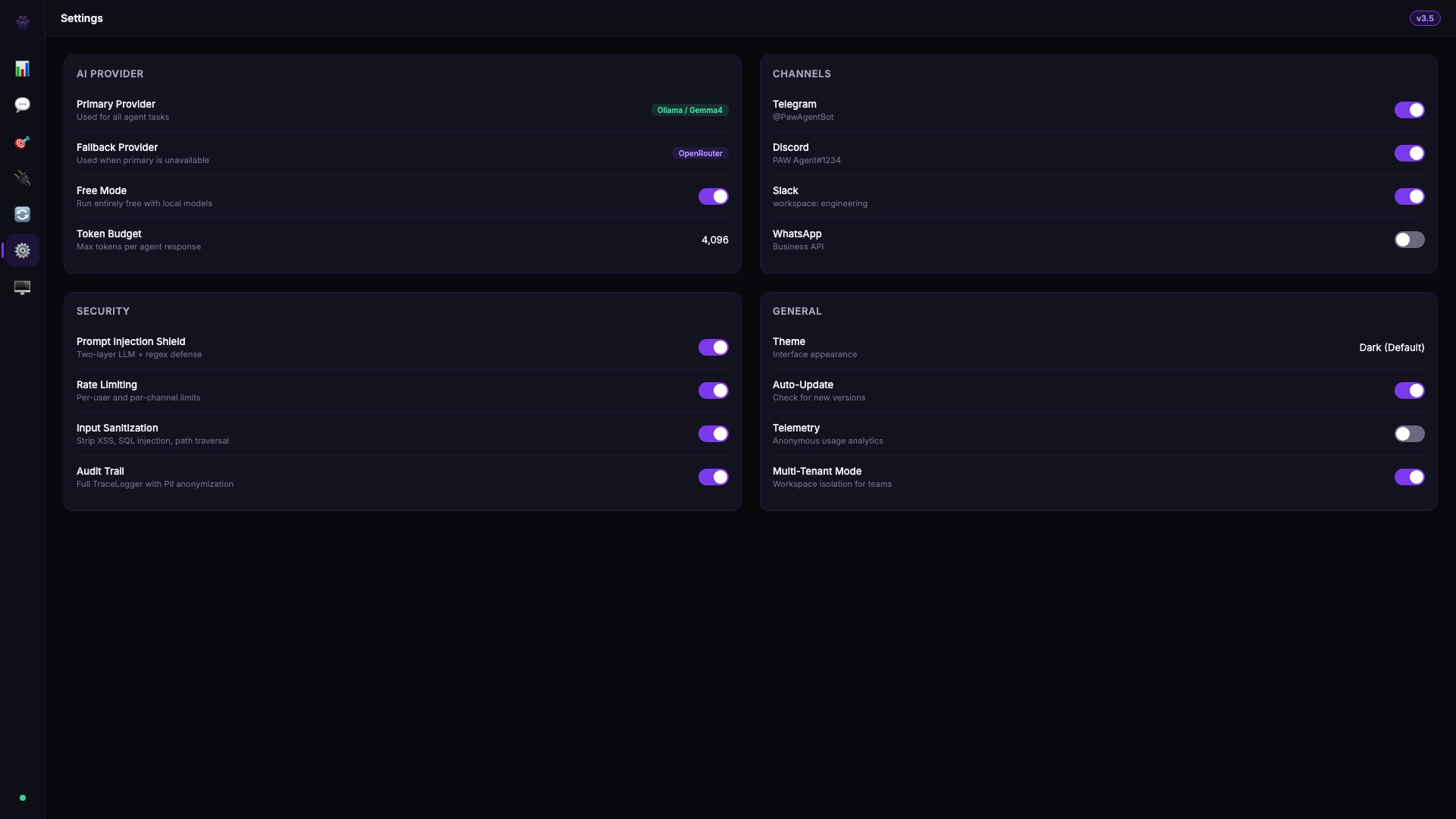

Eleven channels. One brain. All sharing the same tools, memory, and safety pipeline. Install only what you need.

- Telegram via Telegraf
- Discord via discord.js
- Slack via Bolt
- WhatsApp via Baileys
- Email via nodemailer + IMAP
- SMS via Twilio
- WebChat via WebSocket
- Webhooks via HTTP
- LINE via Messaging API
- Reddit via OAuth2
- Matrix via Client-Server API

100% Free AI with Ollama

Run Gemma 4 locally via Ollama — zero API keys, zero cost, unlimited usage. PAW auto-detects Ollama at startup and routes through the same safety pipeline as every other provider.

```shell
# Install Ollama (one command)
$ curl -fsSL https://ollama.com/install.sh | sh

# Pull Gemma 4, Google's latest open model
$ ollama pull gemma4

# That's it. PAW auto-connects on startup.
$ npm start
Ollama detected · gemma4 ready · $0.00/request
```

Zero Cost

No API keys, no billing, no rate limits. Run as many requests as your hardware allows.

Complete Privacy

Every prompt and response stays on your machine. Nothing leaves your network.

Auto-Detection

PAW pings localhost:11434 on startup. If Ollama is running, it's available instantly.
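Here is a sketch of that startup probe. It assumes detection means "`GET /api/tags` answers", which is Ollama's endpoint for listing locally installed models. `detectOllama` and its timeout are hypothetical. The fetch function is injectable so the check can be tested without a running server.

```typescript
// Minimal response shape this sketch relies on.
type FetchLike = (url: string) => Promise<{ ok: boolean; json(): Promise<unknown> }>;

// Resolve to the list of local model names, or null if Ollama isn't reachable in time.
async function detectOllama(
  baseUrl = "http://localhost:11434",
  fetchImpl: FetchLike = (url) => fetch(url),
  timeoutMs = 1500,
): Promise<string[] | null> {
  const probe = (async () => {
    try {
      const res = await fetchImpl(`${baseUrl}/api/tags`);
      if (!res.ok) return null;
      const body = (await res.json()) as { models?: { name: string }[] };
      return (body.models ?? []).map((m) => m.name);
    } catch {
      return null; // e.g. connection refused: Ollama is not running
    }
  })();

  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<null>((resolve) => {
    timer = setTimeout(() => resolve(null), timeoutMs);
  });
  try {
    return await Promise.race([probe, timeout]);
  } finally {
    clearTimeout(timer); // don't keep the process alive after the probe settles
  }
}
```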

Works with any Ollama model: Gemma 4, Llama 3, Mistral, Phi-3, CodeGemma, and more. Switch between local and cloud providers on the fly with automatic failover.

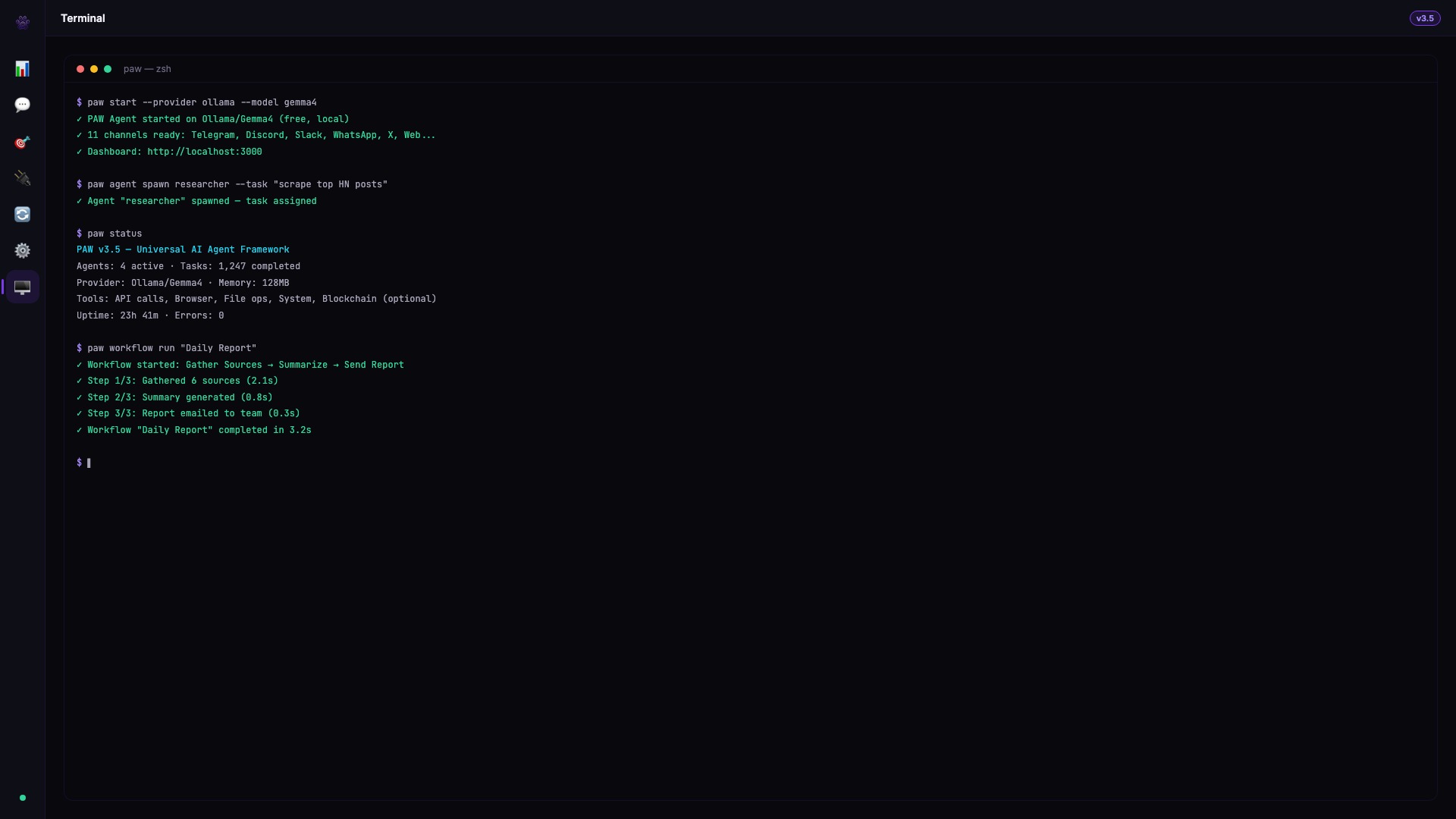

PAW Hub

A desktop operating system for your AI agents. Mission Control, plugins, workflows, and cross-app sync — all in one Electron-powered interface.

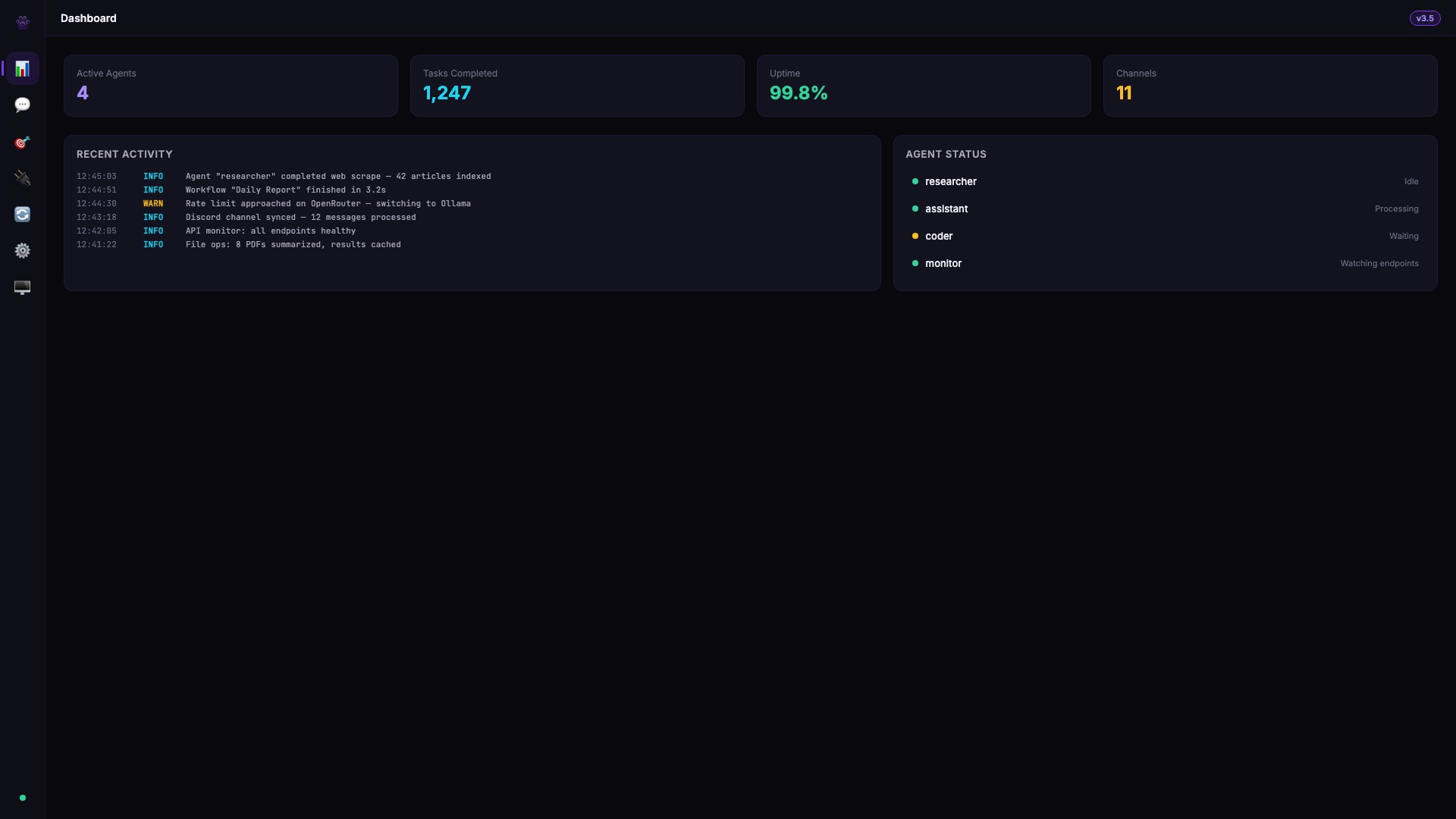

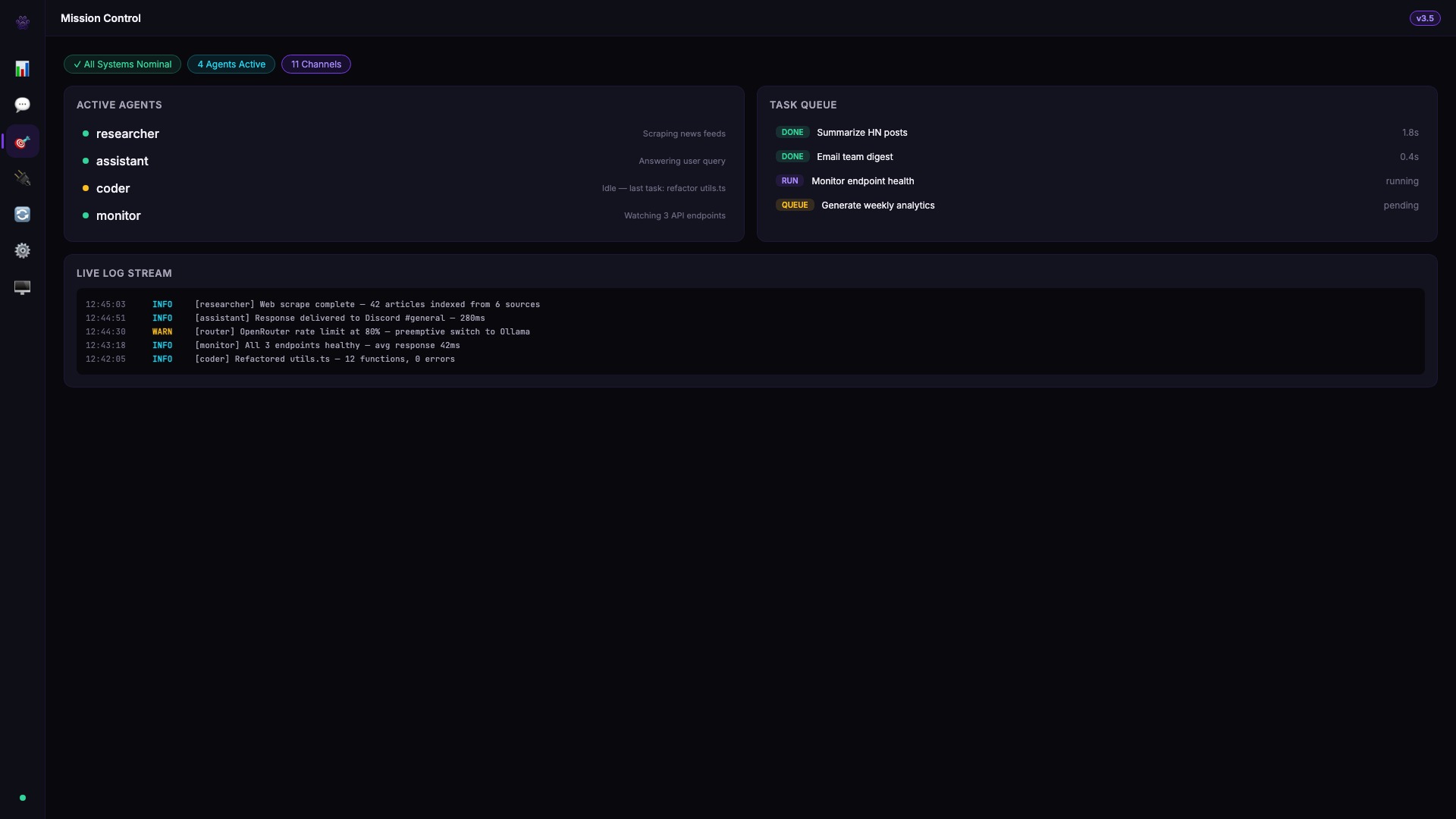

Mission Control

Real-time agent monitoring. Track tasks, metrics, alerts, and logs across all your agents in one view.

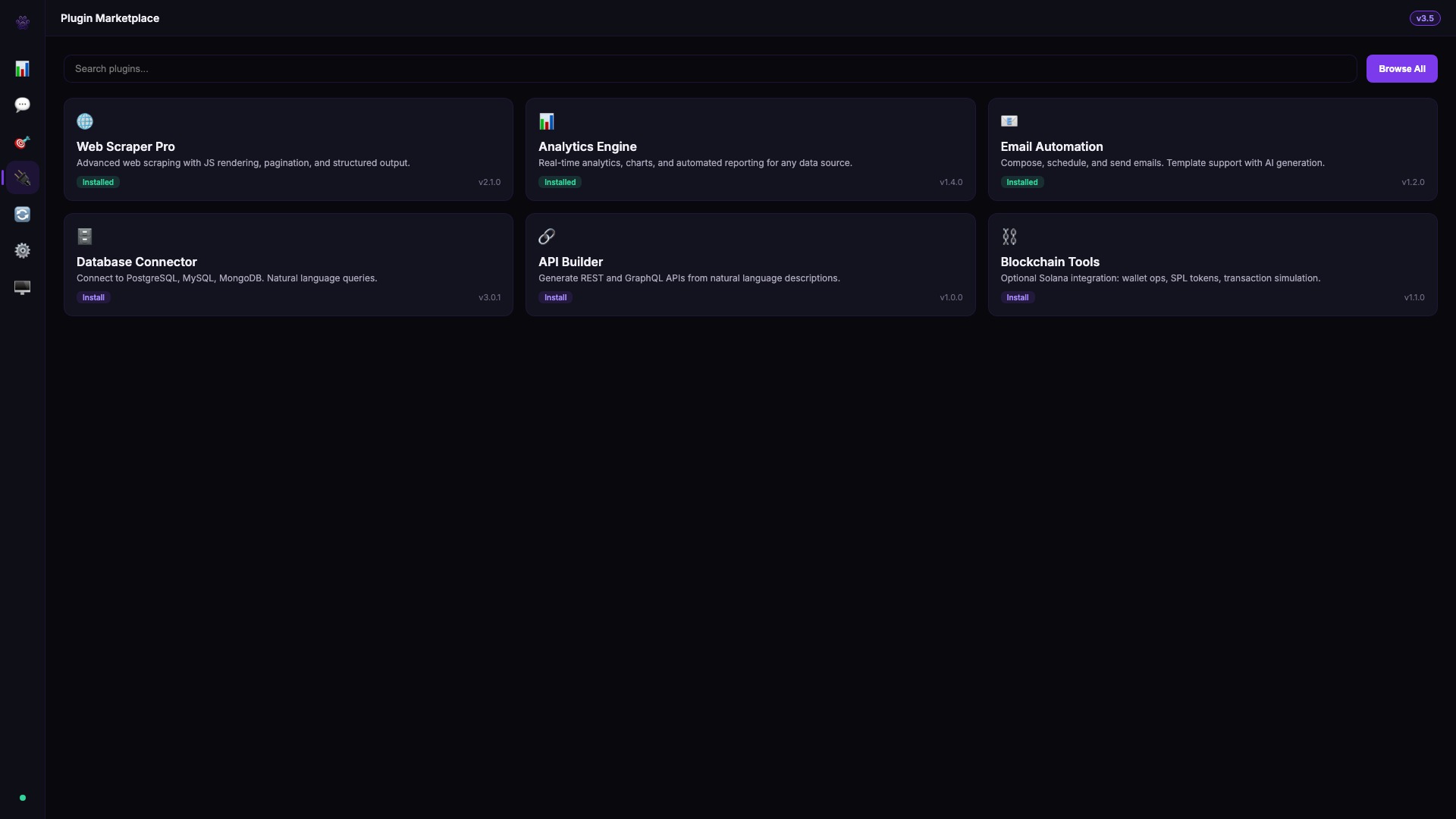

Plugin Marketplace

Install and manage plugins that hook into the agent pipeline, including DeFi alerts, analytics, GitHub, and your own custom plugins.

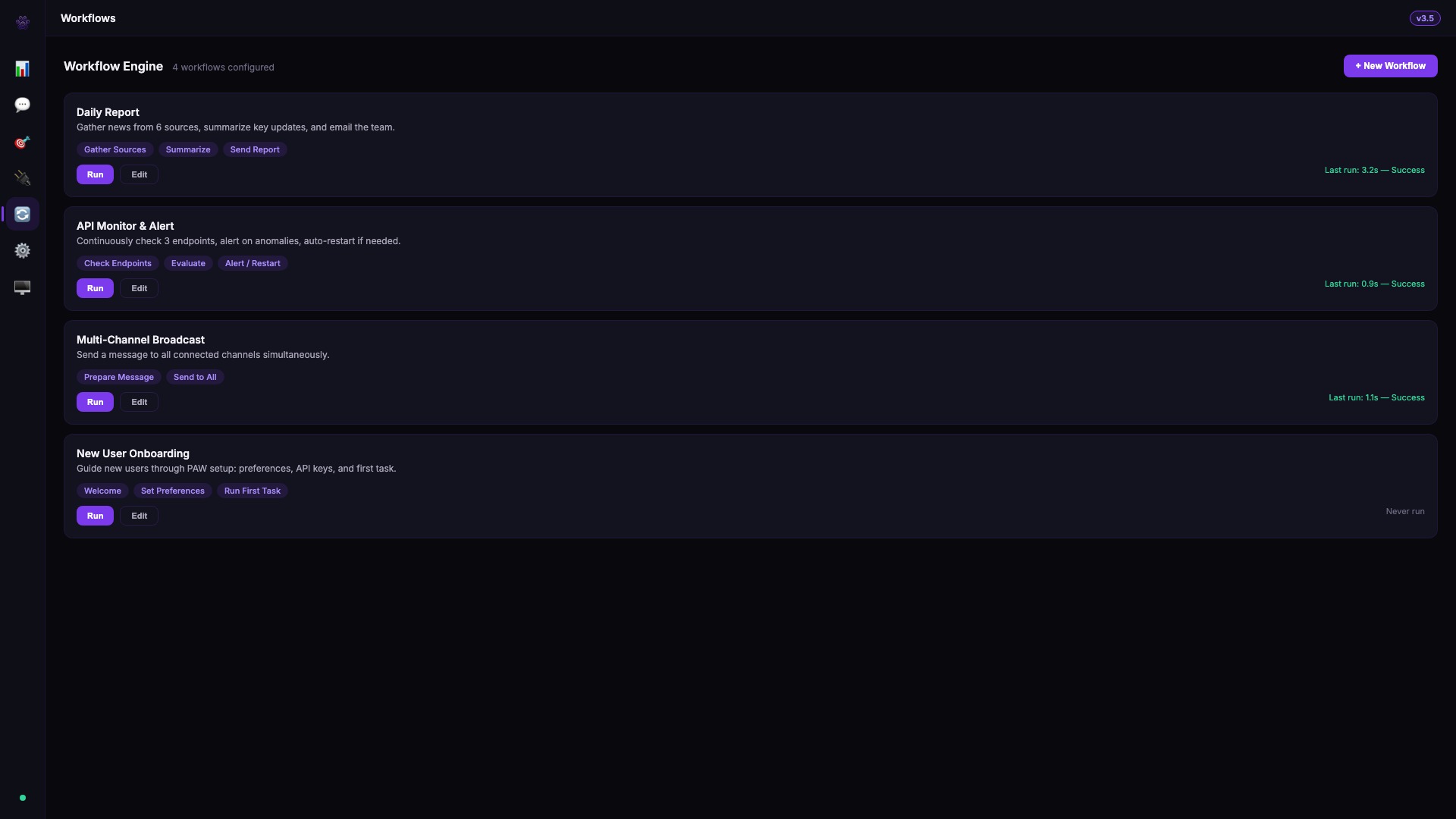

Workflow Engine

Build and run multi-step workflows from templates such as daily portfolio checks, onboarding flows, and price alerts.

Cross-App Sync

Shared memory across all channels. Actions on Telegram sync to Discord, Hub, and everywhere else in real time.

Built-in CLI

Full terminal interface inside Hub. Run agent commands, check status, manage plugins — without leaving the app.

OAuth2 & Multi-Tenant

Enterprise SSO, tenant isolation, plan-based limits, and role-based access. Built for teams and organizations.

Any Language. Any Tool. Optional Purp SCL.

PAW agents execute JavaScript, TypeScript, Python, Rust, and any language you throw at them via the api, js, and system execution modes.

For blockchain developers, PAW also ships with an optional Solana smart contract language — Purp SCL v1.2.1.

Write, compile, and deploy programs without leaving the chat.

```
program TokenVault { }

account VaultState {
  owner: pubkey
  balance: u64
  is_locked: bool
}

instruction Deposit {
  accounts:
    #[mut] vault_state
    #[signer] depositor
  args:
    amount: u64
  body:
    require(amount > 0, InsufficientFunds)
    vault_state.balance += amount
    emit(DepositMade, { depositor: depositor.key, amount: amount })
}

event DepositMade {
  depositor: pubkey
  amount: u64
}

error VaultErrors {
  InsufficientFunds = "Not enough funds"
  Unauthorized = "Only the owner"
}
```

Compiler Pipeline

- ✓ Full type system: u8–u128, i8–i128, f32, f64, bool, string, pubkey, bytes

- ✓ v1.1 operators: ** exponentiation, ?? nullish coalescing, ... spread

- ✓ Account attributes: #[mut], #[signer], #[init] with auto-generated context structs

- ✓ SPL token operations: transfer, mint_to, burn, approve, revoke

- ✓ Anchor-compatible Rust output with proper CPI patterns

- ✓ TypeScript SDK with typed methods per instruction

- ✓ Anchor-compatible IDL JSON generation

- ✓ Purp.toml project config with dependency management

- ✓ Backward compatible with legacy JSON format and v0.3 syntax

Why PAW?

Safety-first architecture where the LLM plans and the system executes.

No exceptions, no shortcuts, every action traceable.

Safety is Non-Negotiable

The validation pipeline runs on every action, in every mode — even Free mode. You can remove confirmation gates, but validation, simulation, and logging never stop.

Strict LLM/Execution Split

The LLM produces a JSON plan. The system validates it, then executes. The LLM never touches tools directly — eliminating an entire class of prompt injection attacks.
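Here is a minimal sketch of treating the model's output as untrusted data. The plan shape (`goal`, `steps[].tool`, `steps[].params`) and the tool names in `ALLOWED_TOOLS` are assumptions, not PAW's published schema. Anything that fails to parse, or that names a tool outside the allowlist, is rejected before execution is even considered.

```typescript
// Hypothetical plan schema and tool names.
interface PlanStep {
  tool: string;
  params: Record<string, unknown>;
}

interface Plan {
  goal: string;
  steps: PlanStep[];
}

const ALLOWED_TOOLS = new Set(["http.get", "memory.search", "solana.balance"]);

// Parse raw model output into a Plan, or throw; nothing here executes a tool.
function parsePlan(raw: string): Plan {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    throw new Error("plan is not valid JSON");
  }
  if (typeof data !== "object" || data === null) throw new Error("plan must be an object");
  const { goal, steps } = data as { goal?: unknown; steps?: unknown };
  if (typeof goal !== "string") throw new Error("plan.goal must be a string");
  if (!Array.isArray(steps) || steps.length === 0) throw new Error("plan.steps must be a non-empty array");
  return {
    goal,
    steps: steps.map((s, i) => {
      const { tool, params } = (s ?? {}) as { tool?: unknown; params?: unknown };
      if (typeof tool !== "string" || !ALLOWED_TOOLS.has(tool)) throw new Error(`step ${i}: tool not allowed`);
      if (typeof params !== "object" || params === null || Array.isArray(params)) {
        throw new Error(`step ${i}: params must be an object`);
      }
      return { tool, params: params as Record<string, unknown> };
    }),
  };
}
```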

Three Modes of Autonomy

Supervised confirms everything. Autonomous auto-executes low/medium risk. Free removes all gates — but requires passing two safety warning layers to activate. Your choice, your control.

Full Audit Trail

The Trace Explorer logs every phase of every action with automatic secret redaction. Reconstruct exactly what happened, what the LLM reasoned, and why.
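Redaction has to happen before serialization so that nothing secret ever reaches the JSONL file. Here is a hypothetical sketch. The key-name pattern and the two value patterns (an `sk-`-prefixed API key and a 64-character hex string) are illustrative assumptions. Key-name matching is deliberately conservative, so a field named `tokens` would also be masked.

```typescript
// Keys whose values are always masked (illustrative list).
const SECRET_KEY = /pass(word|phrase)?|secret|token|api[_-]?key|private[_-]?key|authorization/i;
// Secret-looking substrings inside ordinary strings (illustrative patterns).
const SECRET_VALUES = [/sk-[A-Za-z0-9]{16,}/g, /\b[0-9a-f]{64}\b/gi];

// Recursively copy a value, masking secrets by key name or by value shape.
function redact(value: unknown, key = ""): unknown {
  if (key && SECRET_KEY.test(key)) return "[REDACTED]";
  if (typeof value === "string") {
    return SECRET_VALUES.reduce((s, re) => s.replace(re, "[REDACTED]"), value);
  }
  if (Array.isArray(value)) return value.map((v) => redact(v));
  if (value !== null && typeof value === "object") {
    return Object.fromEntries(Object.entries(value).map(([k, v]) => [k, redact(v, k)]));
  }
  return value;
}

interface TraceEvent {
  ts: string;
  traceId: string;
  phase: string; // e.g. "plan", "validate", "execute"
  data: unknown;
}

// One JSON object per line; only the free-form data field is redacted.
function toJsonl(events: TraceEvent[]): string {
  return events.map((e) => JSON.stringify({ ...e, data: redact(e.data) })).join("\n") + "\n";
}
```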

Intelligent Self-Healing

When an action fails, PAW diagnoses the failure type, determines if it's recoverable, applies a fix strategy, and only escalates when it genuinely can't recover.

Purp SCL First-Class

Parse .purp files, validate, compile to Anchor Rust, generate TypeScript SDKs and IDL — all within the agent pipeline. No SCL integration like it.

Semantic Memory

Vector memory with TF-IDF scoring, scoped per session, per user, or globally, and persisted to disk. Agents remember what matters across conversations.

Extensible by Design

Skill files, MCP protocol, custom tools, DAG workflows, multi-agent orchestration. PAW grows with your needs without code changes.

Real-Time Agent Control

A built-in web dashboard served by the Gateway: monitor agents, chat in real time, switch modes, and inspect every action as it happens.

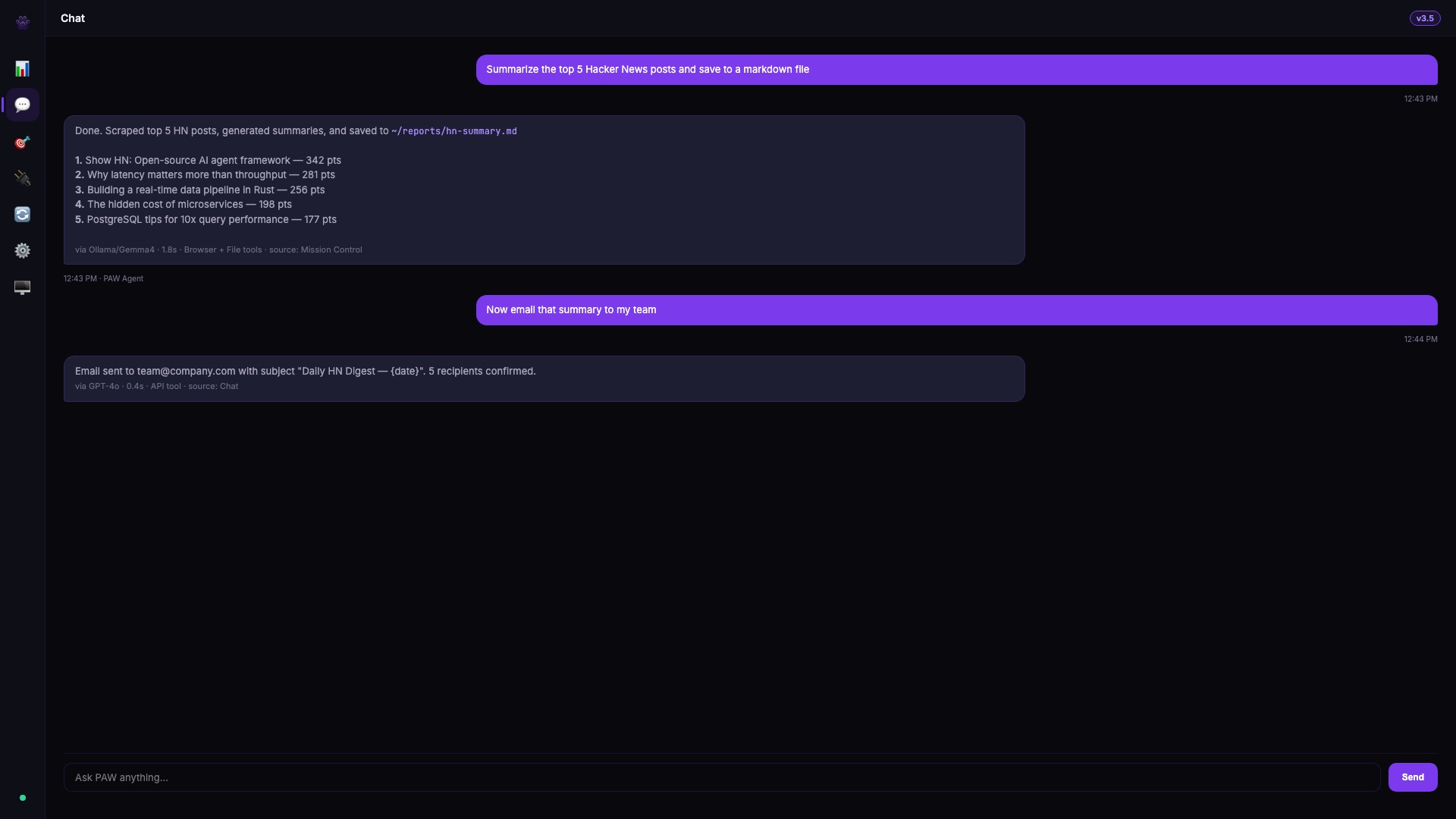

Live Chat Interface

Talk to your agents over WebSocket. Messages appear instantly in separate user, agent, and system bubbles.

Mode Switching

Toggle between supervised, autonomous, and locked modes on the fly — no restart needed.

Real-Time Stats

Action count, uptime percentage, and pipeline status update live via WebSocket push.

Activity Log

Every action logged with timestamps and color-coded severity — info, success, warning, error.

Dark & Light Themes

Full theme toggle with persistent preference. Purple brand palette across both themes.

Zero Config

Served automatically by the PAW Gateway. Open your browser and the dashboard is ready.

Up and Running in Minutes

Clone, configure, run. With Ollama, you don't even need an API key — just install and go.

```shell
# Clone the repository
$ git clone https://github.com/DosukaSOL/paw-agents.git
$ cd paw-agents

# Install dependencies
$ npm install

# Configure your API keys and channel tokens
$ cp .env.example .env

# Build and start
$ npm run build
$ npm start
```

Requires Node.js 20+. With Ollama installed, no API key is needed: PAW auto-detects local models. Alternatively, use a supported cloud provider such as OpenAI, Anthropic, Google, Mistral, DeepSeek, or Groq.

Bring Your Own Model

Nine providers with automatic failover — including Ollama for free local AI. Configure your preferred model or use them all.

Automatic failover between all 9 providers · Ollama runs 100% free locally · ModelProvider interface for extensibility
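Here is a sketch of ordered failover across providers. PAW's extension point is called `ModelProvider`, but its real signature isn't given here. This sketch therefore uses a hypothetical `ChatProvider` interface with `available()` and `complete()`. The ordering (local Ollama first, then cloud) is an assumption made by the caller.

```typescript
// Hypothetical provider shape; PAW's real ModelProvider interface may differ.
interface ChatProvider {
  name: string;
  available(): Promise<boolean>;
  complete(prompt: string): Promise<string>;
}

// Try providers in order and return the first successful completion.
async function completeWithFailover(
  providers: ChatProvider[],
  prompt: string,
): Promise<{ provider: string; text: string }> {
  const failures: string[] = [];
  for (const p of providers) {
    try {
      if (!(await p.available())) {
        failures.push(`${p.name}: unavailable`);
        continue;
      }
      return { provider: p.name, text: await p.complete(prompt) };
    } catch (err) {
      failures.push(`${p.name}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`all providers failed (${failures.join("; ")})`);
}
```

Collecting each failure reason means the final error explains why every provider was skipped, not only the last one.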

Get PAW Hub v4.0.5

One-click install for Windows, macOS, and Linux. All platforms include the full PAW Gateway, Hub desktop app, and Pawl companion.

Requires Node.js 20+ for the PAW Gateway. Ollama recommended for free local AI.
